Introduction

Artificial intelligence is quickly changing lecture halls and classrooms, and automated grading is one of its most contentious applications. At the heart of the debate is a crucial question: can AI grading be fair? As universities pilot these tools, teachers and researchers are weighing their potential benefits against their drawbacks.

What Is AI Grading?

The term “AI grading” refers to the use of artificial intelligence systems to evaluate student assignments, tests, and projects. Rather than depending exclusively on human instructors, these systems analyze responses and assign scores. They are most frequently used in large-scale courses where grading demands are overwhelming. At its core, AI grading functions through algorithms designed to replicate or supplement human evaluation. For instance, an essay submitted by a student may be processed through natural language processing (NLP) tools, which break down grammar, structure, vocabulary, and argumentation. Similarly, multiple-choice exams may be graded accurately and efficiently.

AI grading is being used by higher education institutions to handle a variety of tasks. While universities investigate machine learning systems to evaluate essays or lab reports, online learning platforms frequently use AI to grade quizzes and short answers. Fairness, consistency, and quicker turnaround are the goals of these platforms.

However, the main point of contention is whether AI grading systems can accurately and impartially capture the breadth of students’ learning and creativity. Determining whether fairness can actually be attained in academic settings requires an understanding of the mechanisms by which AI assesses work.

Why Use AI in Grading?

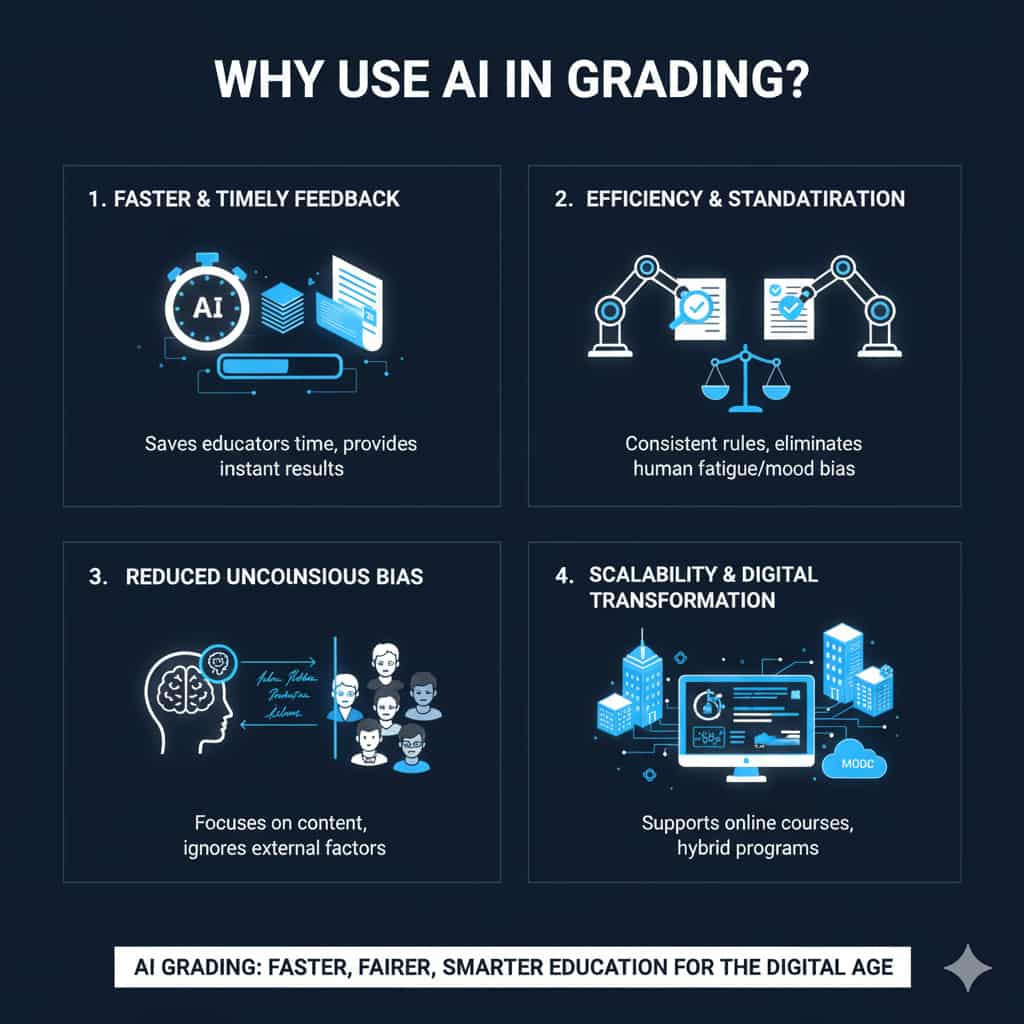

The urgent issues facing contemporary education are the driving force behind the shift to AI in grading. First, it saves teachers a great deal of time. It is impractical for instructors overseeing hundreds of students to give thorough and prompt feedback on each assignment. On the other hand, AI can produce results in a matter of seconds.

Second, AI systems encourage uniformity and efficiency. Human graders can differ in how strict or lenient they are, frequently based on things like weariness or mood. Automated grading systems apply the same criteria to every submission. Because of this perceived neutrality, many institutions are optimistic that AI grading could prove fairer than traditional grading.

Third, AI may lessen unconscious human biases. For instance, a teacher might unintentionally be influenced by a student’s reputation, handwriting, or accent. A well-trained AI system prioritizes content over such outside influences.

Lastly, AI grading is consistent with higher education’s digital transformation. Massive open online courses (MOOCs), hybrid programs, and online courses all need scalable solutions that traditional grading cannot provide. Institutions can meet these increasing demands with the help of automated grading.

Even with these benefits, there are still unanswered questions: Does quicker grading equate to more equitable grading? Can sophisticated reasoning or creativity really be assessed by machines? These inquiries help us understand the more intricate workings of AI grading.

How Does AI Grading Work?

Natural language processing, machine learning, and automated scoring frameworks are all used in AI grading systems. Every element contributes to the results, which in turn affects the fairness of AI grading.

1. Natural Language Processing (NLP).

For essays, NLP divides text into quantifiable parts. Grammar, syntax, coherence, vocabulary usage, and argument structure are all examined. Some systems even attempt to use semantic analysis to measure originality or creativity. But NLP frequently has trouble with abstract ideas or subtle cultural differences.

2. Machine Learning Models.

Large datasets of previously graded work are used to train AI grading tools. The algorithm “learns” the standard scores for particular aspects of writing or problem solving. The likelihood of obtaining fair results increases with the diversity and balance of the training data. However, inequality can be sustained by biased or constrained training sets.

3. Rubrics and Automated Scoring.

Many AI graders use pre-programmed rubrics that are in line with the objectives of the course. With the help of these rubrics, the system is able to allocate points according to observable standards like organization, clarity, or evidence use. Although effective, their emphasis on quantifiable, surface-level characteristics runs the risk of oversimplifying learning objectives.

4. Continuous Feedback Loops.

Human oversight and ongoing model updates are features of advanced platforms. By ensuring that the AI stays in line with human judgment, this hybrid approach minimizes disparities that could jeopardize the fairness of AI grading.

In reality, AI grading is a multi-layered system of algorithms rather than a single technology. How well these systems are created, overseen, and implemented in particular educational contexts has a significant impact on their efficacy.
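To make the rubric idea concrete, here is a minimal sketch of how a grader might turn observable features into points. Everything in it is an assumption made for illustration: the criterion names come from the examples above, but the point weights, the thresholds, the list of evidence phrases, and the function names are hypothetical and do not describe any real product.

```python
import re

# Hypothetical rubric checks. Each returns a value between 0.0 and 1.0.

def organization(text: str) -> float:
    """Share of a three-paragraph target met (blank lines separate paragraphs)."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    return min(len(paragraphs), 3) / 3

def clarity(text: str) -> float:
    """Full credit at 20 words per sentence or fewer, falling linearly to 0 at 40."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    avg_words = sum(len(s.split()) for s in sentences) / len(sentences)
    return max(0.0, min(1.0, (40 - avg_words) / 20))

# Assumed list of phrases that signal evidence; a real system would need far more.
EVIDENCE_MARKERS = ("for example", "for instance", "according to", "because")

def evidence_use(text: str) -> float:
    """Credit for up to two evidence-signalling phrases."""
    lowered = text.lower()
    hits = sum(lowered.count(marker) for marker in EVIDENCE_MARKERS)
    return min(hits, 2) / 2

# Criterion name -> (maximum points, scoring function). Weights are illustrative.
RUBRIC = {
    "organization": (4, organization),
    "clarity": (3, clarity),
    "evidence_use": (3, evidence_use),
}

def score_essay(text: str) -> dict[str, float]:
    """Return points per criterion plus a total out of 10."""
    breakdown = {
        name: round(max_points * check(text), 2)
        for name, (max_points, check) in RUBRIC.items()
    }
    breakdown["total"] = round(sum(breakdown.values()), 2)
    return breakdown

essay = (
    "Uniforms help. For example, they cut costs.\n\n"
    "They also reduce bullying because students look alike.\n\n"
    "Schools should adopt them."
)
print(score_essay(essay))
```

Notice that the sample essay earns full marks simply by having three paragraphs, short sentences, and two marker phrases. That is exactly the oversimplification risk described in the rubric section: surface features are easy to count, but they say little about the quality of the argument.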

Benefits of AI Grading

Adopting AI grading systems has a number of important advantages, many of which directly promote equity and fairness in education.

1. Consistency Across Submissions.

Depending on the day or the student’s identity, human graders might unintentionally apply different standards. On the other hand, AI applies rules consistently. The argument for fair AI grading is strengthened by this consistency, especially in large classes.

2. Speed and Scalability.

Thousands of assignments can be processed by AI in a matter of minutes. Students receive feedback more quickly thanks to this quick turnaround, which speeds up their improvement. Delaying grading, on the other hand, can impede learning.

3. Supporting Overburdened Teachers.

Teachers frequently have a lot of grading to do. AI allows them to concentrate on areas where human expertise is invaluable, such as mentoring, curriculum design, and individualized student support, by automating routine assessments.

4. Potential to Reduce Human Bias.

No system is perfect, but AI can reduce some biases. For instance, it is not swayed by a student’s reputation, handwriting, or cultural stereotypes. Well-trained models can help level the playing field for diverse student populations.

5. Adaptability to Large-Scale Assessments.

Human grading is not feasible in massive open online courses or nationwide testing scenarios. AI provides scalable solutions without sacrificing consistency or turnaround time.

Institutions are still experimenting with automated grading tools because of these advantages. But there are drawbacks to every benefit. Every strength is accompanied by difficulties that raise doubts about the fairness of AI grading.

Challenges and Concerns with AI Grading

The promise of fairness is complicated by a number of issues with AI grading, despite its benefits.

1. Algorithmic Bias and Training Data.

The fairness of AI depends on the data it uses to learn. The AI will inherit and replicate any bias found in previous grading data, whether it be due to linguistic, cultural, or institutional preferences. The fairness of AI grading is directly compromised by this.

2. Lack of Transparency.

AI grading frequently operates as a “black box,” making it difficult for teachers and students to comprehend how scores are calculated. It’s challenging to believe that outcomes are accurate or fair in the absence of transparency.

3. Overemphasis on Measurable Metrics.

AI struggles to assess originality, creativity, or critical thinking while rewarding grammar, structure, and formulaic writing. This raises serious questions about the fairness of evaluating higher-order skills, since students who think creatively may be penalized.

4. Student Trust and Acceptance.

For students, grades are more than just numbers; they have an impact on future prospects, motivation, and self-confidence. Students may lose faith in the educational system as a whole if they believe AI grading is unfair. AI grading fairness depends on trust, and skepticism is still pervasive.

5. Ethical and Legal Considerations.

Academic integrity policies, accountability for mistakes, and privacy concerns are all issues that educational institutions must deal with. Who is at fault if a student is unfairly penalized by an AI system: the school, the software developer, or the instructor?

6. Accessibility and Equity.

If AI is unable to recognize non-standard expressions or alternative methods, students from a variety of linguistic and cultural backgrounds may be disproportionately impacted. This calls into question whether AI grading fairness can be applied universally.

In conclusion, even though AI provides consistency and speed, these advantages are frequently overshadowed by worries about ethical responsibility, bias, and transparency. Until these issues are resolved, the question of whether AI grading can ever be genuinely fair will remain unanswered.

Is AI Grading Truly Fair and Unbiased?

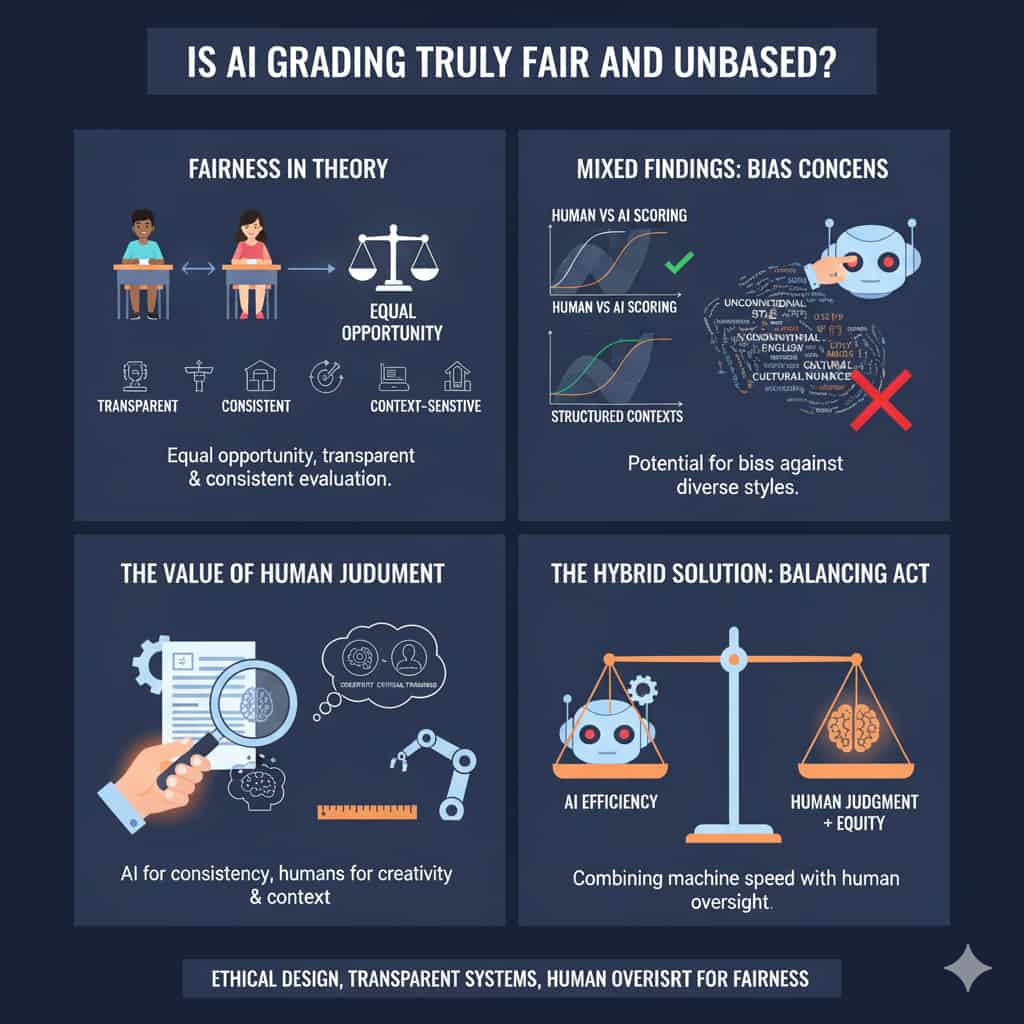

We must first define fairness in assessment before we can respond to the question of whether AI grading is actually fair. Fairness is frequently defined in educational theory as giving every student an equal chance to demonstrate their learning without institutionalized bias. Fairness in AI also refers to evaluations that are transparent, consistent, and context-sensitive.

Research findings are mixed. Some studies find that AI graders closely match human scores, especially on standardized essays. This suggests that fairness is achievable in predictable, structured situations. However, other studies point to biases against non-native English speakers, unusual writing styles, or culturally specific arguments. These differences show how strongly context and dataset quality affect AI grading fairness.
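How closely an AI grader "matches" human scores has to be measured somehow. One statistic commonly reported in automated essay scoring work is quadratic weighted kappa, which penalizes large disagreements more heavily than small ones. The studies referred to above are not named, so this is not a reconstruction of their method; it is a plain-Python sketch of the statistic, with a hypothetical function name and integer scores assumed.

```python
def quadratic_weighted_kappa(
    human: list[int], ai: list[int], min_score: int, max_score: int
) -> float:
    """Agreement between two sets of integer scores.

    1.0 means perfect agreement, 0.0 means agreement no better than chance,
    and negative values mean systematic disagreement.
    """
    if len(human) != len(ai) or not human:
        raise ValueError("score lists must be non-empty and of equal length")
    if max_score <= min_score:
        raise ValueError("the scale needs at least two score levels")

    k = max_score - min_score + 1  # number of score levels
    n = len(human)

    # observed[i][j] counts essays scored i by the human and j by the AI.
    observed = [[0] * k for _ in range(k)]
    for h, a in zip(human, ai):
        observed[h - min_score][a - min_score] += 1

    human_hist = [sum(row) for row in observed]
    ai_hist = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    weighted_observed = 0.0
    weighted_expected = 0.0
    for i in range(k):
        for j in range(k):
            weight = (i - j) ** 2 / (k - 1) ** 2
            weighted_observed += weight * observed[i][j]
            # Count expected if the two graders scored independently.
            weighted_expected += weight * human_hist[i] * ai_hist[j] / n

    if weighted_expected == 0:
        # Every score is identical on both sides, so there is nothing to disagree on.
        return 1.0
    return 1.0 - weighted_observed / weighted_expected

human_scores = [1, 2, 3, 4]
print(quadratic_weighted_kappa(human_scores, [1, 2, 3, 4], 1, 4))  # identical scores
print(quadratic_weighted_kappa(human_scores, [4, 3, 2, 1], 1, 4))  # reversed scores
```

A high overall value can still hide problems. Computing the same statistic separately for different groups of students, such as native and non-native English speakers, is one way to check whether agreement holds for everyone.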

Critics contend that statistical consistency alone is insufficient to ensure fairness. True fairness also depends on the values embedded in assessment design. AI grading runs the risk of narrowing the definition of academic excellence if it places more emphasis on quantifiable grammar and structure than on originality or nuanced argumentation.

Proponents argue that the best chance of attaining fairness is provided by hybrid systems, in which AI does the initial scoring and humans review the final results. Institutions can strike a balance between speed and equity by fusing human judgment with machine efficiency.
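A hybrid workflow can be organized in many ways. The sketch below shows one possible policy, narrower than reviewing every result: the AI scores everything, and a submission goes to a human when the score sits close to the pass mark or the model reports low confidence. The 0 to 100 scale, the confidence value, the thresholds, and all names are assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass
class AiResult:
    submission_id: str
    score: float       # AI-assigned score, assumed to be on a 0-100 scale
    confidence: float  # model's self-reported confidence in [0, 1] (assumed to exist)

def needs_human_review(
    result: AiResult,
    pass_mark: float = 50.0,       # hypothetical pass/fail boundary
    margin: float = 5.0,           # how close to the boundary counts as "borderline"
    min_confidence: float = 0.8,   # below this, a human always checks
) -> bool:
    near_boundary = abs(result.score - pass_mark) <= margin
    return near_boundary or result.confidence < min_confidence

def split_for_review(results: list[AiResult]) -> tuple[list[str], list[str]]:
    """Return (ids queued for human review, ids accepted as scored)."""
    review = [r.submission_id for r in results if needs_human_review(r)]
    accepted = [r.submission_id for r in results if not needs_human_review(r)]
    return review, accepted

batch = [
    AiResult("a", score=82, confidence=0.95),  # clear pass, confident
    AiResult("b", score=52, confidence=0.97),  # borderline score
    AiResult("c", score=70, confidence=0.60),  # low confidence
]
print(split_for_review(batch))
```

The thresholds are where the trade-off between speed and equity becomes explicit: a wider margin or a higher confidence bar sends more work to humans, and a narrower one trusts the machine more.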

In the end, AI grading is neither intrinsically fair nor unfair. The way systems are created, trained, and managed determines how fair they are. The question is not whether AI should grade, but how to guarantee fairness in AI grading through ethical practice, open design, and ongoing human supervision.

How to Make AI Grading More Fair and Transparent

It takes deliberate design, oversight, and policy frameworks to guarantee fairness in AI grading.

1. Use Diverse and Representative Training Data.

A diverse range of student populations, writing styles, and cultural contexts should be represented in training data. This improves AI grading equity in diverse classrooms and lowers the possibility of systemic bias.

2. Implement Human Oversight.

Students benefit from both efficiency and contextual sensitivity thanks to hybrid models, in which AI generates initial scores and humans verify outcomes. Additionally, oversight fosters trust between teachers and students.

3. Regular Auditing of AI Systems.

Biases, errors, and unforeseen consequences can be found through ongoing assessment and independent audits. Instead of treating AI grading as a one-time installation, institutions should view it as a process that needs to be updated on a regular basis.

4. Transparent Communication with Students and Educators.

Building confidence is facilitated by outlining the limitations of AI grading as well as the function of human review. When students comprehend the reasoning behind the results, they are more likely to accept them.

5. Develop Ethical Guidelines and Policy Frameworks.

It is crucial to have clear policies regarding data privacy, accountability, and error correction. Institutions must make it clear who is accountable for errors and how students can challenge outcomes.

Universities can get closer to attaining fair AI grading while upholding academic integrity and student confidence by implementing these procedures.
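As one illustration of what a regular audit could look like, the sketch below compares AI and human scores on a double-graded sample and reports the average gap for each student group. The group labels, the tolerance, and the function names are hypothetical, and a real audit would also need larger samples and a proper statistical test.

```python
from collections import defaultdict
from statistics import mean

def score_gap_by_group(records):
    """Average (AI score minus human score) per group.

    records: iterable of (group_label, human_score, ai_score) tuples taken
    from a sample of work that was graded both ways.
    """
    differences = defaultdict(list)
    for group, human_score, ai_score in records:
        differences[group].append(ai_score - human_score)
    return {group: round(mean(values), 2) for group, values in differences.items()}

def flag_groups(gaps, tolerance=0.5):
    """Groups whose average gap exceeds the tolerance in either direction."""
    return sorted(group for group, gap in gaps.items() if abs(gap) > tolerance)

# Made-up sample data for illustration only.
sample = [
    ("native_speaker", 4, 4),
    ("native_speaker", 3, 4),
    ("non_native_speaker", 4, 3),
    ("non_native_speaker", 5, 3),
]
gaps = score_gap_by_group(sample)
print(gaps)
print(flag_groups(gaps))
```

A negative gap means the AI scored that group lower than human graders did on average. A flagged group is a prompt for closer human investigation, not proof of bias on its own.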

The Future of AI in Educational Assessment

AI will probably play a bigger part in grading in the future. One of the main obstacles to AI grading fairness may be addressed by emerging technologies like explainable AI, which promise to make algorithms more transparent and intelligible.

Advanced semantic analysis may be incorporated into future systems, allowing machines to assess creativity, nuance, and cultural context more accurately. Additionally, by customizing grading rubrics to particular curricula, adaptive models could provide more individualized evaluations without sacrificing consistency.

But rather than being entirely automated, AI grading will probably be hybrid in the future. When evaluating sophisticated abilities like critical thinking, creativity, and ethical reasoning, human judgment is still crucial. The difficulty lies in finding the right balance between human insight and machine efficiency.

Fairness must continue to be at the core of innovation as educators and legislators continue to mold the role of AI in classrooms. AI has the potential to support a more equitable educational system in addition to speeding up grading.

Frequently Asked Questions

1. Can AI completely replace human graders?

No. While AI can handle routine tasks efficiently, human graders remain essential for evaluating complex skills like creativity, ethics, and nuanced reasoning.

2. What types of assignments are best suited for AI grading?

Structured tasks like multiple-choice exams, coding exercises, and formulaic essays are well suited. Open-ended, creative work still requires human evaluation.

3. How do educators check if AI grading is fair?

By comparing AI scores with human grading samples, auditing results regularly, and ensuring training datasets are diverse and unbiased.

4. What role does bias in training data play?

Training data directly influences outcomes. If the dataset is biased, the AI will reflect and amplify those biases, undermining AI grading fairness.

5. Are AI-graded results legally valid in academic institutions?

Yes, but institutions must ensure compliance with policies on transparency, accountability, and student rights to appeal scores.

6. Do students trust AI grading systems?

Trust varies. Students often question whether AI can understand creativity or nuance. Transparency and hybrid grading models can improve trust.

7. Can AI grading be customized to fit different curricula?

Yes. Many systems allow rubrics to be tailored to specific learning outcomes, improving alignment with course goals.

8. What happens when AI makes mistakes?

Institutions must have review processes and appeal mechanisms in place. Human oversight ensures errors can be corrected.

9. How are universities currently using AI in grading?

Many institutions use AI for large-scale testing, online learning platforms, and preliminary essay scoring, often combined with human review.

10. Will future AI systems solve fairness and bias concerns completely?

Not entirely. While advancements will reduce bias, complete fairness may be impossible. Human oversight will always be necessary.